The Rise of Small AI: Qwen 3.5 and the Local Revolution

Alibaba's new models bring frontier-level reasoning to standard consumer hardware without the cloud.

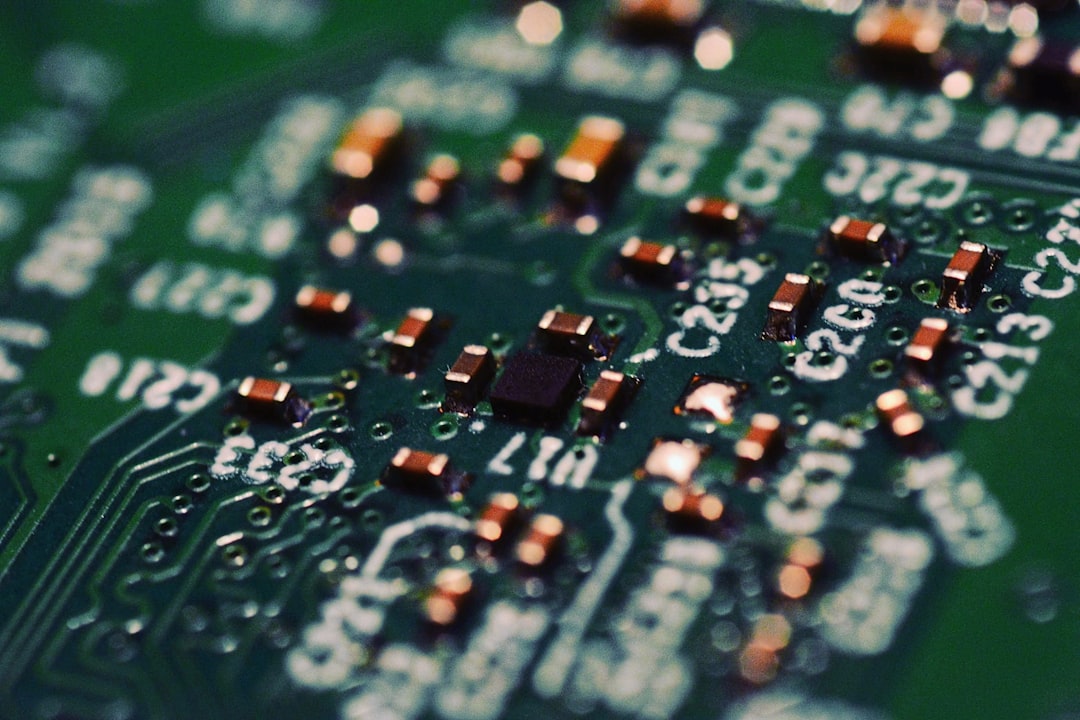

The release of Alibaba’s Qwen 3.5 series on Hugging Face marks a definitive pivot in the artificial intelligence industry, shifting the focus from massive server farms to the devices in our pockets. These Small Language Models (SLMs) offer native multimodality and reasoning capabilities that, until recently, were only possible through expensive cloud subscriptions. For the first time, high-tier intelligence is becoming a truly decentralized personal utility.

The 9B Powerhouse and the Hardware Shift

The standout of the new lineup is the 9B-parameter model, which delivers STEM performance comparable to the industry-leading Claude 3.7 Sonnet from early 2025. This achievement is remarkable because the 9B model can run locally on standard consumer hardware using only about 5GB of RAM. By utilizing advanced quantization (a process that compresses a model's weights by storing them at lower numerical precision to reduce memory usage), users can achieve elite reasoning without needing a dedicated graphics processing unit (GPU).
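To make the idea of quantization concrete, here is a minimal Python sketch of symmetric round-to-nearest quantization, one simple scheme among many. The article does not say which method Qwen 3.5 builds use, so the function names, the 4-bit width, and the single per-tensor scale are all illustrative assumptions.

```python
def quantize(weights, bits=4):
    """Map float weights to signed integers of the given bit width.

    Hypothetical helper for illustration: uses one scale for the whole
    list (per-tensor scaling), which is an assumption, not a claim about
    how any particular model is quantized.
    """
    qmax = 2 ** (bits - 1) - 1              # largest magnitude, e.g. 7 for 4-bit
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round each weight to the nearest integer step and clamp to the range.
    q = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Recover approximate float weights from the integer codes."""
    return [v * scale for v in q]


# Demo on a tiny made-up weight vector.
original = [0.7, -0.4, 0.1, 0.0]
codes, scale = quantize(original, bits=4)
restored = dequantize(codes, scale)
print("integer codes:", codes)
print("restored     :", restored)
print("max error    :", max(abs(a - b) for a, b in zip(original, restored)))
```

Each weight is now described by a small integer plus one shared scale, which is where the memory saving comes from. Real runtimes typically go further, for example by packing two 4-bit codes into a single byte and using a separate scale for each small group of weights; this sketch keeps plain Python integers for readability.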

This efficiency eliminates the need for $20-per-month cloud subscriptions for many common tasks like coding, data analysis, or science tutoring. Instead of sending sensitive data to a remote server, a standard laptop can now handle complex queries entirely offline. The performance gap between open-weight models and proprietary cloud giants has narrowed significantly, with open-weight models now often trailing the state of the art by only a few months.

Furthermore, the Qwen 3.5 series marks an architectural step forward through native multimodality. While previous small models often relied on external 'adapters' to process images or video, these models are designed from the ground up to handle multiple data types. This unified approach allows the 0.8B model to run smoothly on flagship smartphones, requiring less than 1GB of available RAM to process both text and visual information.
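The RAM figures quoted for the 9B and 0.8B models can be sanity-checked with back-of-the-envelope arithmetic. The sketch below estimates weight storage only, as parameters times bits per weight. The bit widths are assumptions (the article does not state which precision its figures refer to), and the estimate ignores runtime overhead such as activations and context cache, so real usage will be somewhat higher.

```python
def weight_memory_gb(params, bits_per_weight):
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes).

    Hypothetical helper: counts weights only, not activations,
    context cache, or runtime overhead.
    """
    return params * bits_per_weight / 8 / 1e9


# Parameter counts taken from the model names in the article.
models = {"9B": 9e9, "0.8B": 0.8e9}

for name, params in models.items():
    for bits in (16, 8, 4):
        gb = weight_memory_gb(params, bits)
        print(f"{name} model at {bits}-bit: ~{gb:.1f} GB of weights")
```

Under these assumptions, the 9B model at 4 bits per weight needs roughly 4.5 GB for weights, which is consistent with the "about 5GB" figure once some overhead is added, while the 0.8B model stays under 1 GB at either 8 or 4 bits per weight.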

The Intelligence Plateau and Data Sovereignty

As model performance begins to converge across the industry, we are approaching what many experts call an 'intelligence plateau.' For the vast majority of daily tasks, the difference between a trillion-parameter model and a 9B model is becoming imperceptible to the average user. When several different models can solve a complex coding problem or explain a physics concept with equal accuracy, the competitive advantage shifts from raw power to local accessibility.

This shift has significant implications for the high-end hardware market. If a basic CPU and 5GB of RAM can deliver 'Sonnet-level' intelligence, the massive VRAM found in expensive professional GPUs may become overkill for most AI enthusiasts. As influencer @JohnGalt_is_www noted in a recent viral discussion, high-end gaming cards might eventually feel like 'paperweights' as training efficiency outpaces the need for massive local compute for inference.

Beyond hardware costs, the primary driver of this revolution is the concept of data sovereignty. Local execution ensures that private documents, proprietary codebases, and personal queries never leave the physical device. Users no longer have to trust a third-party corporation with their data or worry about API outages and internet connectivity. While cloud giants argue that their future 'frontier' models will still require massive clusters for complex agentic reasoning, the Qwen 3.5 release proves that the AI we use every day is moving back into the hands of the individual.
