ByteDance Is Teaching Machines To Mimic Hollywood By Stealing Its Soul

Seedance 2.0 is a technical marvel that highlights a very human, very ugly problem with AI

Every generation gets the digital apocalypse it deserves, and ours seems to be one where a ByteDance algorithm decides it can make a better Tom Cruise movie than Tom Cruise. Enter Seedance 2.0, the latest text-to-video model that has everyone from VraserX to the SAG-AFTRA union clutching their pearls and firing off cease-and-desists. It is a terrifyingly competent piece of software that proves, once again, that the biggest threat to art isn't a lack of talent; it's the sheer efficiency of theft.

The Infinite Machine of Derivative Content

Seedance 2.0 is, objectively, a masterpiece of brute-force engineering. ByteDance has managed to bridge the gap between 'vaguely unsettling digital hallucination' and 'legitimate cinematic output,' offering native audio-visual synchronization and physical interaction that actually feels grounded in reality. It is the kind of model that makes screenwriters like Rhett Reese openly admit that the traditional path to filmmaking is effectively dead. If you can type a prompt and receive a blockbuster sequence in a single pass, the barrier to entry isn't just lowered—it is obliterated.

But let’s talk about the 'insane' quality VraserX mentioned. That quality didn't come from divine inspiration or a sudden breakthrough in sentient AI; it came from feeding the model every piece of copyrighted intellectual property ByteDance could scrape off the internet. The irony is as thick as a Hollywood contract: the very models now being sued into oblivion were trained on the movies, faces, and narratives they are currently designed to replace. When studios scream about ethics, they ignore that their own business model is now being held up as the gold-standard training set for their executioner.

The Hypocrisy of the Studio System

The legal theater playing out between the MPA and ByteDance is the ultimate distraction. While studios like Disney sue to protect their 'IP,' they are simultaneously signing massive licensing deals to let other companies train models on that same IP. The concern isn't really about artistic integrity or the sanctity of the work; it is about who gets to hold the leash. The studios aren't afraid of AI; they are afraid of losing their monopoly on the means of production to an entity that doesn't care about their licensing fees.

Ultimately, the takeaway here isn't about AI capabilities—it’s about the inevitable commoditization of human experience. When you teach a machine to follow 'cinematic rules,' you are just teaching it to repeat the patterns of our own history, stripped of the human context that made those patterns valuable in the first place. History shows us that you can't litigate a technological shift into non-existence; you can only try to own it. The studios will eventually pivot, the copyright laws will be 'updated,' and we’ll all be watching AI-generated movies that feel like a dream you had after eating too much cheese. The technology isn't the problem—our desperate need to replicate the past at a lower cost is.

[Figure: Seedance 2.0 Conflict Dynamics]

Keep reading

Tech

TechElon Musk Claims Tesla Will Build AGI via Atom-Shaping Robots

Musk is rebranding Tesla as an AGI company to distract from sagging vehicle sales, but his latest 'atom-shaping' claim is just a fancy wrapper for an old, unfulfilled promise.

Tech

TechOpenAI Brings Agentic Coding to Windows Ecosystem

OpenAI has expanded its standalone Codex application to Windows, introducing a secure sandbox for multi-agent development projects.

Tech

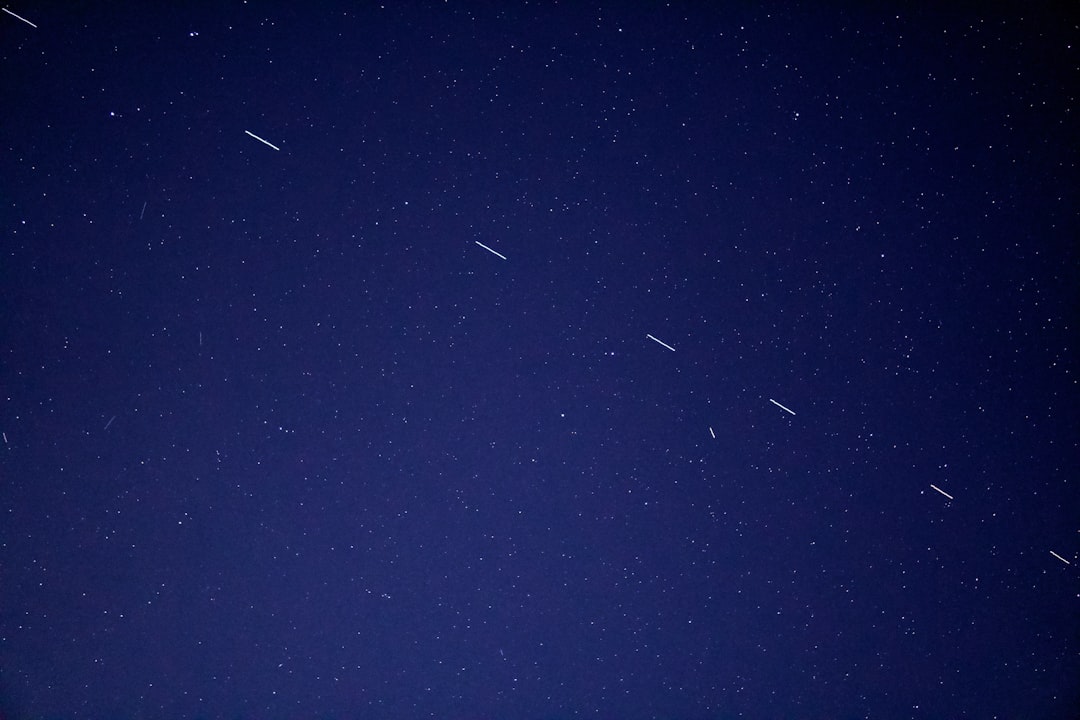

TechStarlink Hits Ten Million Milestone as SpaceX Looks to the Future

SpaceX's Starlink has reached 10 million active users while preparing for a new era of satellite-to-mobile connectivity.