Why Your Next Desktop Might Actually Be a Mini Data Center

The shift from cloud dependency to high-performance local AI infrastructure for the modern professional.

For the last decade, the tech industry has operated under the assumption that the future belongs entirely to the cloud. We were told that local hardware would eventually become little more than a thin client, a mere window into massive data centers owned by a handful of giants. However, a quiet counter-revolution is taking place in home offices and small studios around the world.

The Economics of Local Intelligence

The primary driver behind this hardware renaissance is a simple matter of economics. While cloud-based AI services offer low barriers to entry, the long-term costs of API calls for high-volume users have become prohibitive. For a startup or a solo researcher running inference tasks twenty-four hours a day, a monthly cloud bill can quickly eclipse the cost of owning the silicon outright.

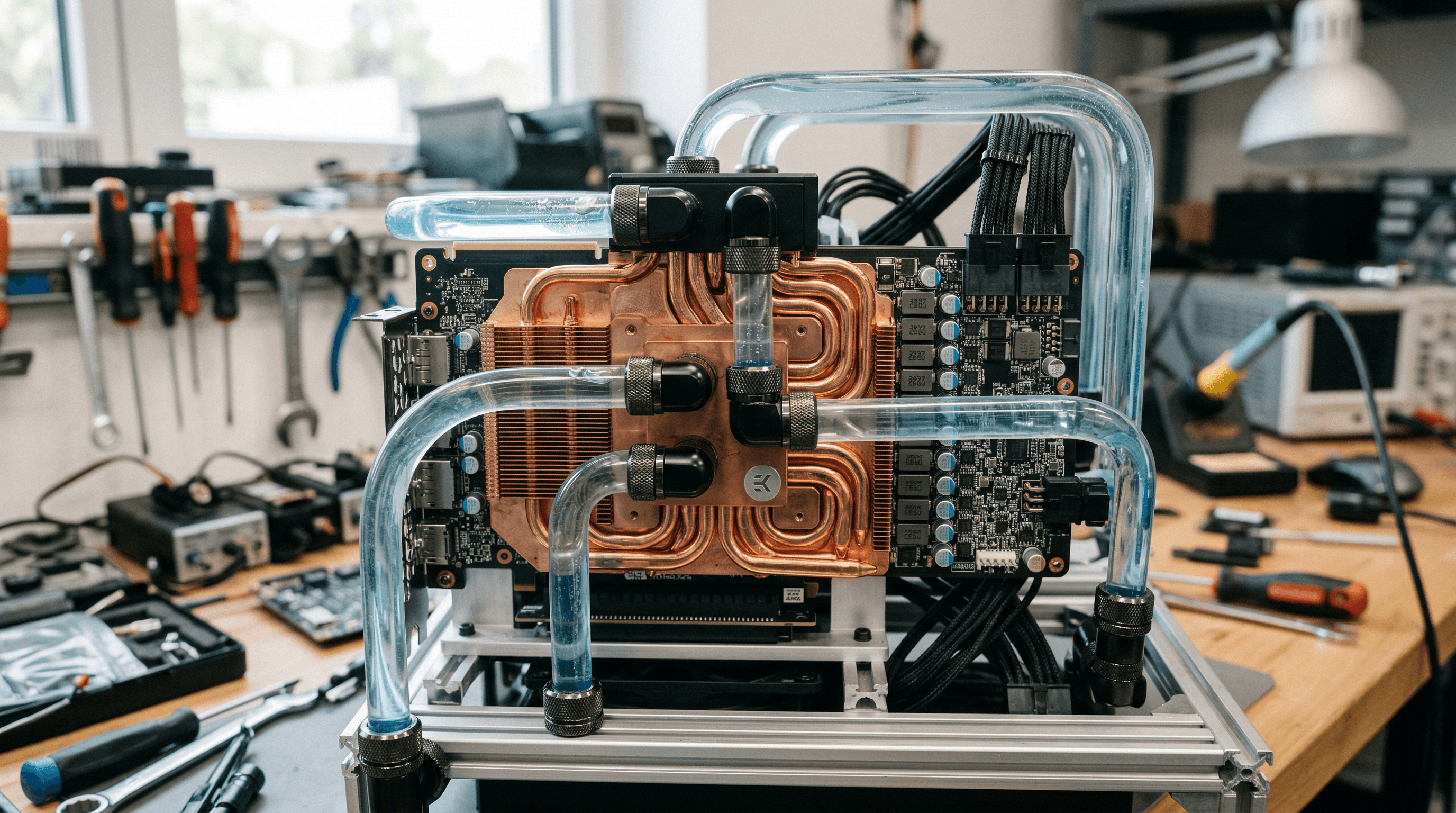

Modern open-source models have become remarkably efficient, allowing prosumer-grade hardware to deliver performance that was previously reserved for enterprise server rooms. By investing in dedicated local clusters, often built from interconnected GPUs with high VRAM, users are finding they can recoup their initial investment in less than a year. This isn't just about saving money; it is about reclaiming the means of production in the digital age.
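The payback claim comes down to simple arithmetic, which can be sketched in a few lines of Python. Every figure below (hardware price, monthly cloud bill, electricity cost) is a hypothetical placeholder chosen for illustration, not a number reported in this article, and the function name `breakeven_months` is likewise an assumption.

```python
import math


def breakeven_months(hardware_cost, monthly_cloud_bill, monthly_power_cost):
    """Return the number of whole months until owned hardware has paid for itself.

    Returns None when running locally saves nothing per month,
    in which case the purchase never breaks even.
    """
    monthly_saving = monthly_cloud_bill - monthly_power_cost
    if monthly_saving <= 0:
        return None
    return math.ceil(hardware_cost / monthly_saving)


# Hypothetical inputs: a 6,000 workstation replacing an 800-per-month
# cloud bill, with 50 per month of extra electricity.
print(breakeven_months(6000.0, 800.0, 50.0))  # prints 8
```

Under these assumed inputs the hardware pays for itself in eight months. The result is very sensitive to usage: a lighter workload with a smaller cloud bill pushes the break-even point out by years, or removes it entirely.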

Furthermore, the 'always-on' nature of local hardware eliminates the unpredictability of cloud pricing tiers and rate limits. When you own the compute, you own the priority. This reliability is essential for developers building complex autonomous agents that require constant, low-latency access to processing power without the risk of being throttled by a third-party provider.

Privacy and the Sovereignty of Data

Beyond the financial incentives, a more philosophical shift toward data sovereignty is taking place. In an era where every prompt and document uploaded to a cloud service potentially trains a competitor's model, the value of 'air-gapped' intelligence cannot be overstated. Professionals in law, medicine, and engineering are increasingly turning to local clusters to ensure their proprietary data never leaves their physical premises.

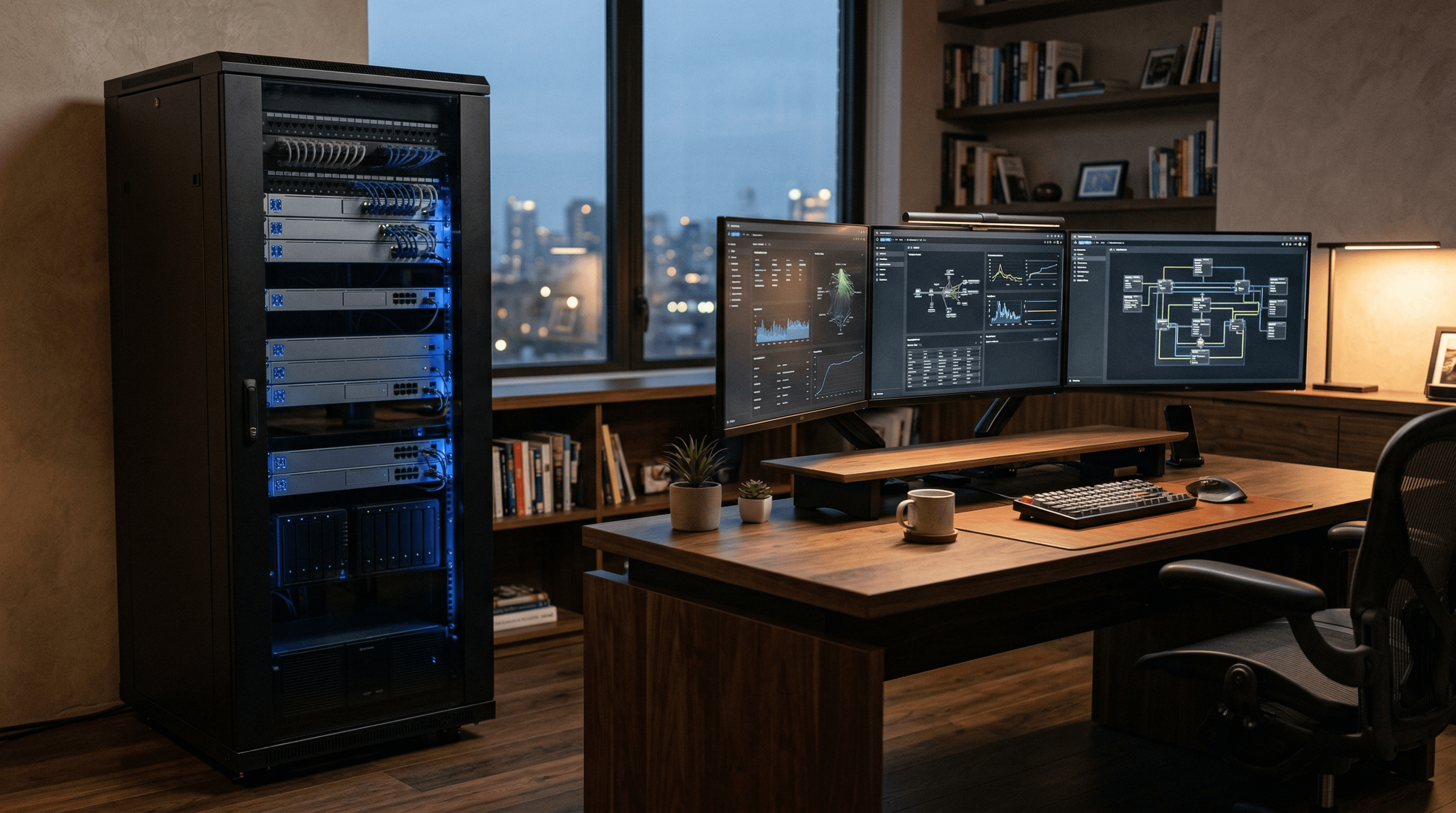

The hardware itself is evolving to meet this demand. We are seeing a move away from loud, power-hungry rack servers toward 'silent' compute modules that fit aesthetically into a modern workspace. These systems leverage advanced liquid cooling and high-bandwidth interconnects to provide massive parallel processing power without the acoustic profile of a traditional data center.

As we look toward the end of the decade, the 'Personal Cloud' will likely become a standard fixture for the high-end professional. This localized infrastructure serves as a private vault for a user’s entire digital life, indexed and analyzed by local AI that knows everything about its owner but tells nothing to the world. It is the ultimate expression of digital privacy: a system that is powerful enough to be useful, but personal enough to be trusted.
