The 27B Revolution: How Qwen 3.5 is Dismantling the Big AI Monopoly

Alibaba's latest model offers frontier-class reasoning on consumer-grade hardware.

For years, the narrative of artificial intelligence has been defined by the mantra that bigger is always better. Massive clusters of GPUs and billion-dollar investments seemed like the only path to high-level reasoning and sophisticated coding capabilities. However, the release of Alibaba’s Qwen 3.5-27B is fundamentally shifting that conversation, proving that architectural efficiency can trump sheer scale.

The Efficiency Paradox: Performance Without the Scale

Released in late February 2026, Qwen 3.5-27B has quickly become the sleeper hit of the open-weights community. Despite its relatively modest 27-billion-parameter count, the model delivers intelligence that rivals models many times its size. According to recent data from Artificial Analysis, it holds an Intelligence Index of 42.0. That ranks it at the top of its size class and lets it trade blows with industry giants like Grok 4 and Claude Haiku 4.5.

The secret to this performance lies in its hybrid architecture, which interleaves Gated DeltaNet and Gated Attention layers in a 3:1 ratio. Gated DeltaNet is a form of linear attention. Each such layer carries a fixed-size recurrent state rather than a key-value cache that grows with every token, so only one layer in four pays the full cost of attending over a long input. This design choice optimizes the model for long-context reasoning. The model also posts a staggering 85.8% on the GPQA Diamond benchmark, a test designed to measure graduate-level scientific reasoning. It excels at coding too, matching GPT-5 mini on the SWE-bench Verified leaderboard with a score of 72.4%.

Perhaps most impressive is its performance on 'Humanity’s Last Exam' (HLE), where it scored 22.2%. That result is considered near state-of-the-art for open-weights models. It signals that the gap between localized, accessible AI and massive, centralized 'BigAI' is closing faster than anticipated. For developers, this means they can integrate advanced agentic capabilities into their workflows without the overhead of enterprise-scale infrastructure.
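The minimal NumPy sketch below makes the hybrid design concrete. It assumes that the 3:1 ratio means a repeating block of three Gated DeltaNet layers followed by one Gated Attention layer, which is the layout Alibaba used in the earlier Qwen3-Next. It implements the gated delta rule as published by Yang et al. (2024), "Gated Delta Networks: Improving Mamba2 with Delta Rule." The names `layer_schedule`, `gated_delta_step` and `run_sequence` are hypothetical. Qwen 3.5's real kernels are multi-head, chunked and heavily optimized, so treat this as an illustration of the idea rather than the model's implementation.

```python
import numpy as np

def layer_schedule(num_layers: int, deltanet_per_attention: int = 3) -> list[str]:
    """Assumed layout: N Gated DeltaNet layers, then one Gated Attention layer,
    repeated. The default N = 3 gives the 3:1 ratio."""
    period = deltanet_per_attention + 1
    return [
        "gated_attention" if (i + 1) % period == 0 else "gated_deltanet"
        for i in range(num_layers)
    ]

def gated_delta_step(S, q, k, v, alpha, beta):
    """One recurrent step of the gated delta rule.

    S:     (d_v, d_k) memory matrix, the layer's entire recurrent state
    q, k:  (d_k,) query and L2-normalized key
    v:     (d_v,) value
    alpha: gate in (0, 1] that decays everything stored so far
    beta:  write strength in (0, 1]
    """
    d_k = k.shape[0]
    # Erase a beta-fraction of whatever is stored under key k, and decay the rest by alpha ...
    S = alpha * S @ (np.eye(d_k) - beta * np.outer(k, k))
    # ... then write the new key -> value association.
    S = S + beta * np.outer(v, k)
    # The layer's output reads the memory with the query.
    return S, S @ q

def run_sequence(T: int, d_k: int = 8, d_v: int = 4, seed: int = 0):
    """Feed T random tokens through one DeltaNet layer and report the state shape."""
    rng = np.random.default_rng(seed)
    S = np.zeros((d_v, d_k))
    for _ in range(T):
        q = rng.standard_normal(d_k)
        k = rng.standard_normal(d_k)
        k /= np.linalg.norm(k)  # keys are L2-normalized in Gated DeltaNet
        v = rng.standard_normal(d_v)
        S, _ = gated_delta_step(S, q, k, v, alpha=0.95, beta=0.5)
    return S.shape

if __name__ == "__main__":
    print(layer_schedule(8))
    # Same state size after 10 tokens or 1,000 tokens, unlike a growing KV cache.
    print(run_sequence(10), run_sequence(1_000))
```

The point of the sketch is the state size. A DeltaNet layer keeps a single d_v × d_k matrix however long the input gets, while each attention layer's key-value cache grows with every token. Under the assumed 3:1 layout, only a quarter of the layers carry that growing cost.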

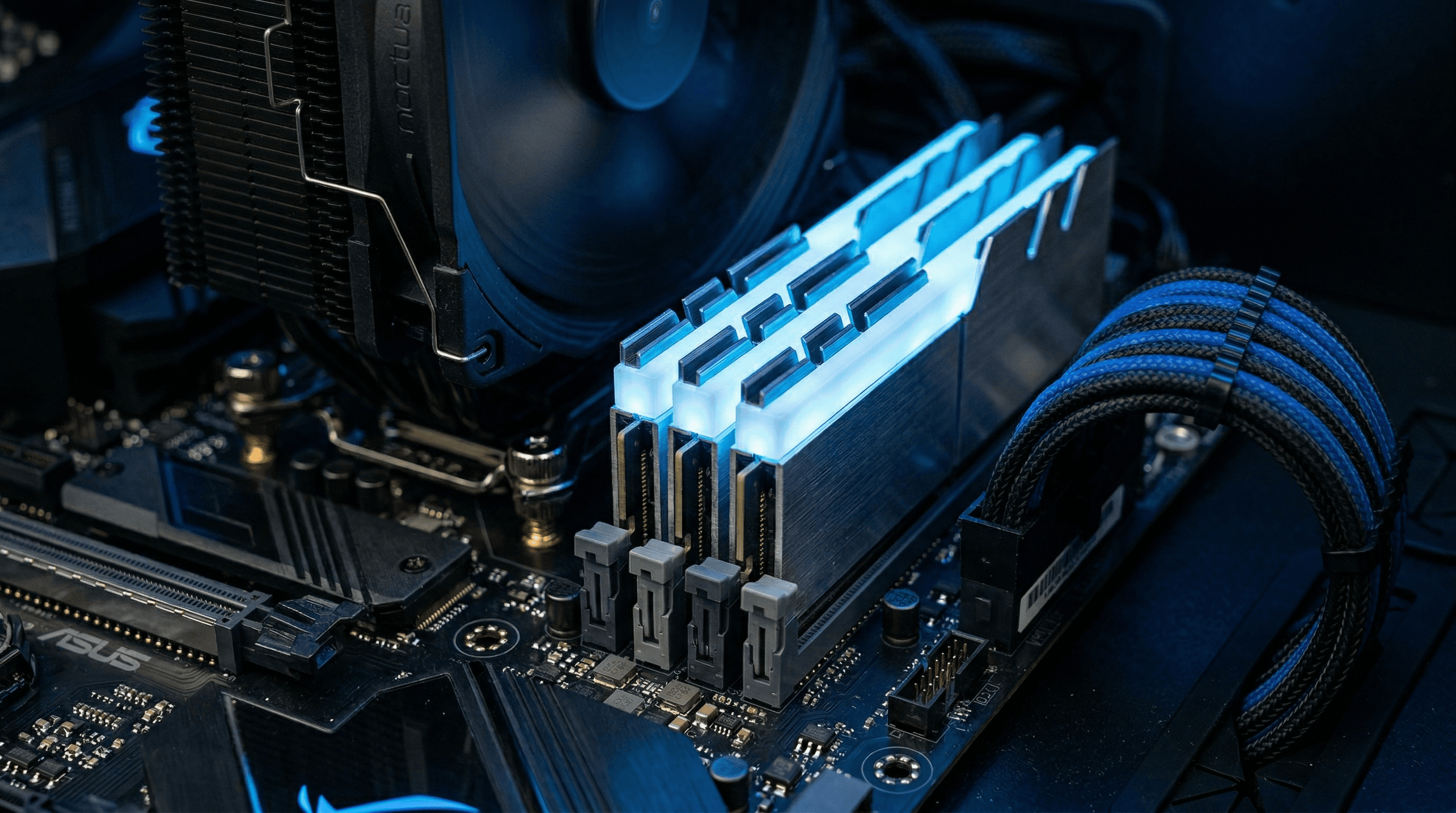

The Rise of the Personal Supercomputer

The most disruptive aspect of Qwen 3.5-27B is its accessibility. An unquantized version requires roughly 54GB to 64GB of RAM. Unquantized means the model runs at its native 16-bit precision, with no quantization loss. That fits comfortably within the memory of modern high-end consumer hardware, such as an Apple M3 Ultra Mac or an AMD Strix Halo PC. Users can therefore run a frontier-class model locally at zero API cost, keep their data completely private, and do without third-party subscriptions.

Software has kept pace with the hardware. Local model servers such as LM Studio can now power 'Clawdbots': personal agents that run on a user's own machine yet can be reached securely over the internet. By hosting Qwen 3.5-27B on a home machine, a developer effectively gets a private, high-speed assistant available on any device, anywhere. This decentralization of AI power poses a significant challenge to the business models of tech giants that rely on heavy capital expenditure and centralized control.

Market analysts are already asking whether we are seeing the beginning of a 'Capex Bubble.' If a 27B Chinese model can match the utility of massive U.S. flagship models, the justification for multi-billion-dollar GPU clusters becomes harder to maintain. Furthermore, with rivals like DeepSeek V4 on the horizon, the pressure on Western AI firms to innovate beyond sheer parameter count has never been higher. Qwen 3.5-27B is not just a benchmark winner; it is a signal that the future of AI belongs to the efficient.
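The RAM figure above follows from simple arithmetic, and hosting the model locally usually comes down to a single HTTP call. The Python sketch below makes three assumptions. It counts weights only, in decimal gigabytes. It assumes LM Studio's OpenAI-compatible server is running on its default port, 1234. It uses a hypothetical model identifier, `qwen3.5-27b`; substitute whatever name LM Studio lists for your download. The helper names are also illustrative.

```python
import json
import urllib.request

def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Memory for the weights alone, in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9

# Assumption: LM Studio's local server is enabled on its default port and
# exposes the OpenAI-compatible chat completions endpoint.
LMSTUDIO_URL = "http://localhost:1234/v1/chat/completions"
# Hypothetical model identifier; check the name LM Studio shows for your copy.
MODEL_ID = "qwen3.5-27b"

def build_chat_request(prompt: str, url: str = LMSTUDIO_URL,
                       model: str = MODEL_ID) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )

def ask_local_model(prompt: str) -> str:
    """Send the request and return the assistant's reply text."""
    with urllib.request.urlopen(build_chat_request(prompt), timeout=120) as resp:
        payload = json.load(resp)
    return payload["choices"][0]["message"]["content"]

if __name__ == "__main__":
    for label, bits in [("BF16", 16), ("8-bit", 8), ("4-bit", 4)]:
        print(f"{label}: ~{weight_memory_gb(27e9, bits):.1f} GB of weights")
    # Uncomment once the LM Studio server is running with the model loaded:
    # print(ask_local_model("Explain the gated delta rule in two sentences."))
```

At 16 bits per parameter, 27 billion weights come to about 54 GB, which matches the low end of the range quoted above. The remaining headroom presumably covers the KV cache, activations and runtime overhead. Quantizing to 8 or 4 bits would shrink the weights to roughly 27 GB or 13.5 GB, at some cost in output quality.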
