The Year of Quantum Engineering: Why 2026 is the Turning Point for Subatomic Power

From laboratory curiosities to industrial tools, quantum processors are finally outperforming supercomputers in the real world.

For decades, quantum computing was a technology perpetually stuck ten years in the future. In 2026, that narrative has finally shifted as the industry moves from laboratory experimentation to verifiable industrial utility. This year, we have stopped asking if quantum computers will work and started asking how quickly they can be integrated into the global supply chain.

The End of the Error Era

The most significant hurdle for quantum progress has always been 'noise': the tendency of qubits to lose their fragile quantum state before a calculation completes. In early 2026, a collaboration between Microsoft and Quantinuum shattered this barrier by demonstrating logical qubits that are 800 times more reliable than the physical qubits they are built upon. This milestone signals the beginning of the fault-tolerant era, where machines can finally perform long, complex calculations without collapsing into errors.
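To see why an 800-fold reliability gain matters for long calculations, consider a rough back-of-the-envelope sketch. It assumes that "800 times more reliable" means an 800 times lower error rate per operation, that errors are independent, and that the physical error rate is 1 in 1,000; that last figure is a hypothetical chosen for illustration, not a number from the announcement.

```python
def success_probability(error_rate: float, num_operations: int) -> float:
    """Chance that every one of num_operations independent operations succeeds."""
    return (1.0 - error_rate) ** num_operations


# Hypothetical physical error rate per operation (illustrative assumption).
PHYSICAL_ERROR_RATE = 1e-3
# Improvement factor quoted in the article, read here as "800x lower error rate".
IMPROVEMENT_FACTOR = 800
LOGICAL_ERROR_RATE = PHYSICAL_ERROR_RATE / IMPROVEMENT_FACTOR

for n in (100, 1_000, 10_000):
    p_physical = success_probability(PHYSICAL_ERROR_RATE, n)
    p_logical = success_probability(LOGICAL_ERROR_RATE, n)
    print(f"{n:>6} operations: physical {p_physical:.4f}, logical {p_logical:.4f}")
```

Under these assumptions, a 10,000-step calculation almost never finishes cleanly on physical qubits, while the logical version succeeds nearly every time. Real devices have correlated errors and other overheads, so this shows only the trend.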

While reliability has improved, raw speed has also seen a dramatic leap. Google’s latest hardware, the Willow chip, recently executed the 'Quantum Echoes' algorithm to perform molecular simulations 13,000 times faster than the world’s most powerful classical supercomputers. This isn't just a theoretical speedup; it is a repeatable result with beyond-classical accuracy, one that lets researchers model chemical reactions that were previously impractical to simulate.

These breakthroughs have led to what experts call 'Verifiable Quantum Advantage.' Unlike previous claims of quantum supremacy that involved abstract math problems, the current results focus on molecular structures relevant to drug discovery and materials science. We are no longer just proving that these machines are fast; we are proving they are useful for solving the world's hardest physical problems.

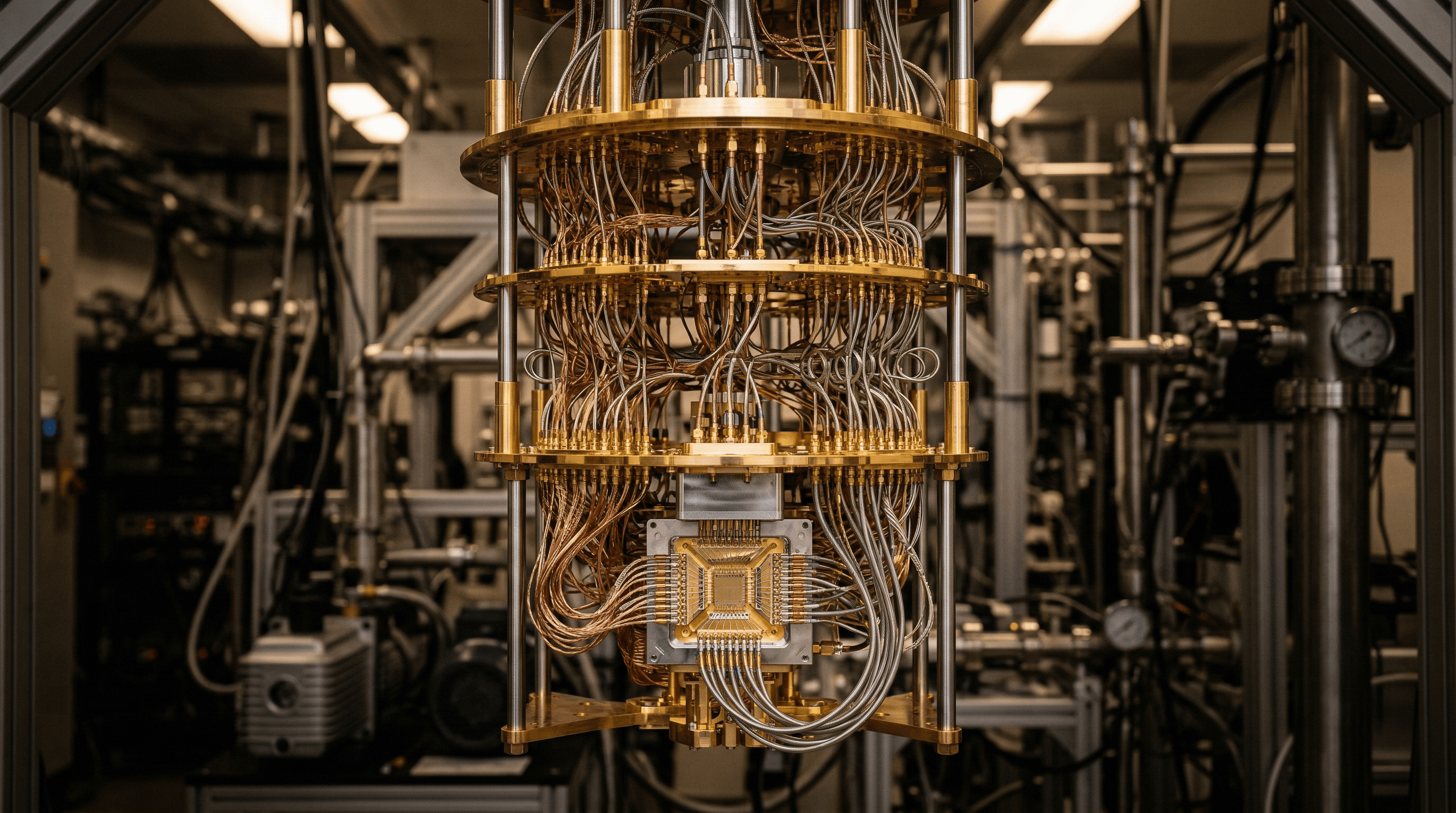

Scaling the Unthinkable: Hardware and Heat

As the reliability of individual qubits improves, the challenge has shifted to scaling these systems without creating a massive footprint. IBM’s Nighthawk processor has become the gold standard for performance, achieving 330,000 Circuit Layer Operations Per Second (CLOPS), a measure of how many layers of a quantum circuit a system can execute each second. This 65% performance increase over 2024 models demonstrates that quantum chips are becoming more efficient at running the high volume of circuits required for modern enterprise applications.
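The two figures above imply a 2024 baseline that the article does not state. A quick check, assuming the 65% increase is measured relative to that baseline (the function name here is just for illustration):

```python
def implied_baseline(current_value: float, fractional_increase: float) -> float:
    """Work backwards from a value and its fractional increase to the starting value."""
    return current_value / (1.0 + fractional_increase)


CLOPS_NOW = 330_000      # figure quoted in the article
INCREASE = 0.65          # "65% performance increase over 2024 models"

baseline_2024 = implied_baseline(CLOPS_NOW, INCREASE)
seconds_per_layer = 1.0 / CLOPS_NOW

print(f"Implied 2024 baseline: about {baseline_2024:,.0f} CLOPS")
print(f"Average time per circuit layer now: {seconds_per_layer * 1e6:.2f} microseconds")
```

On that reading, the 2024 systems ran at roughly 200,000 CLOPS, and the current figure works out to about three microseconds per circuit layer on average.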

Another major breakthrough involves the 'wiring bottleneck.' Traditionally, as you add more qubits, the tangle of wires required to control them at temperatures near absolute zero becomes unmanageable. D-Wave recently demonstrated on-chip cryogenic control, moving the necessary electronics inside the cooling unit itself. This innovation allows thousands of qubits to reside in a much smaller physical space, clearing the path for the massive, utility-scale facilities currently being built in Chicago and Brisbane by companies like PsiQuantum.

Looking further ahead, scientists at the Norwegian University of Science and Technology have identified a triplet superconductor known as NbRe. This material could potentially allow quantum processors to operate with near-zero energy loss. While the work is still in its early stages, such materials science breakthroughs hint at a future where the massive cooling requirements of today’s quantum computers might eventually be replaced by more sustainable, energy-efficient designs.

