GPT-5.4 CODEX Leads The Field In Deep Architectural Reasoning

Achieving a 35.48% score on the brutal SWE Atlas benchmark, GPT-5.4 CODEX reveals a massive gap between coding and true systems thinking.

The bar for AI software engineering just moved, and it is significantly higher than most realize. While frontier labs have been touting 80% success rates on standard coding tasks, GPT-5.4 CODEX (xHigh) has set a new high on the far more difficult SWE Atlas benchmark with a 35.48% accuracy score. This performance highlights a critical transition: from simple code-patching to the daunting task of understanding complex, multi-file system architecture.

Beyond The Tactical Patch

For months, the industry has been obsessed with SWE-Bench Pro, which tests whether an AI can act as a junior developer—locating a bug and writing a surgical fix within a specific file. But as researcher @chatgpt21 noted, this tactical prowess is deceptive. The real bottleneck for AI in production environments isn't just fixing a typo; it is navigating the labyrinthine nature of modern software architecture where a single change can trigger a cascading failure elsewhere.

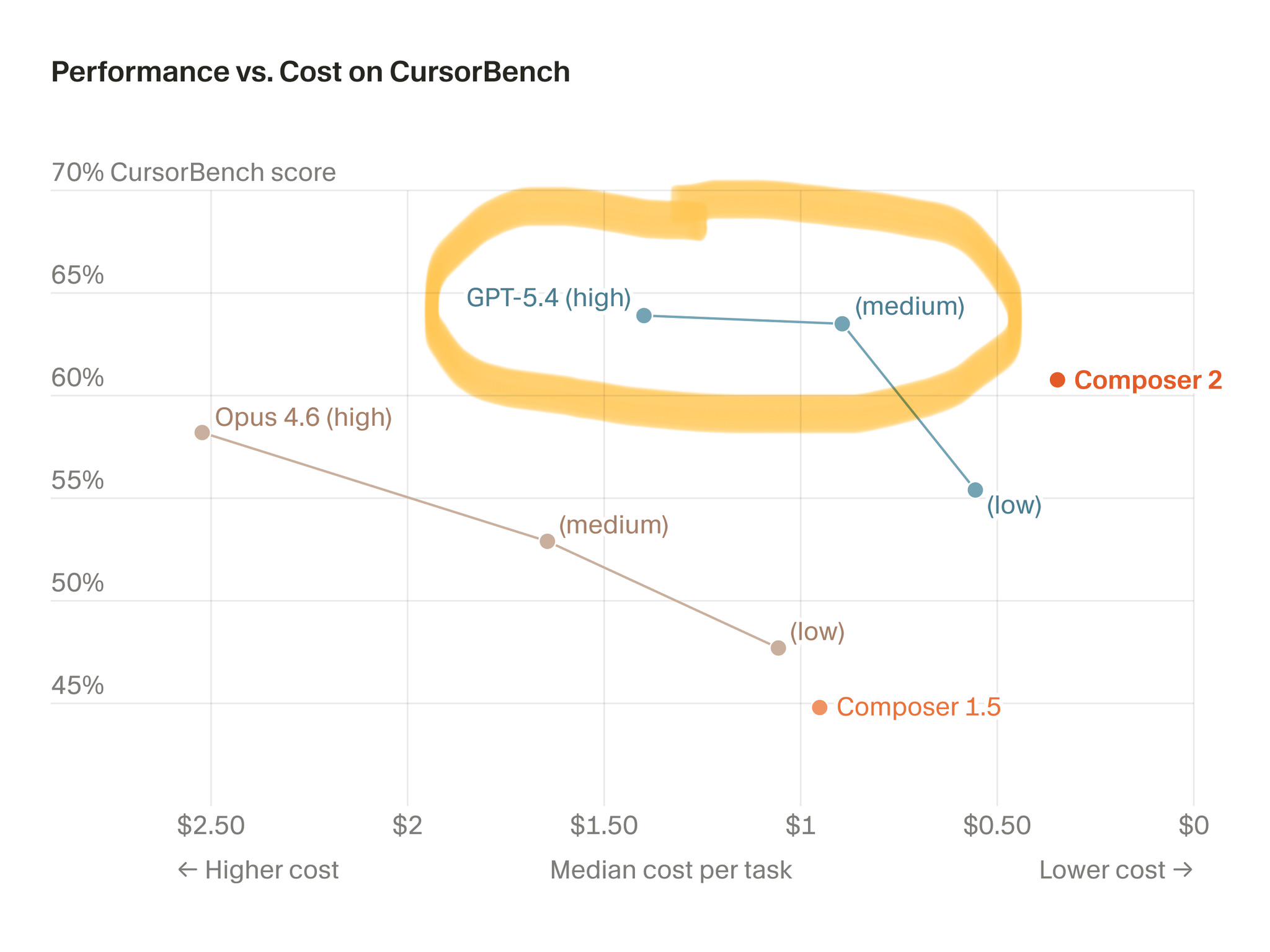

This is why SWE Atlas is the new 'boss fight' for models. It forces an AI to explore a sandbox repository, run experiments, and perform deep analysis across multiple interconnected files to answer high-level architectural questions. In this domain, the top models aren't hitting 80%; the leader barely clears the 35% mark. GPT-5.4 CODEX's ability to edge out Claude Opus 4.6 in this arena suggests that the race for 'autonomous engineering' is moving from pattern matching to genuine reasoning.

The Strategic Future Of Coding

Think of this shift like the evolution of chess engines. Early AI benchmarks were like tactical puzzles—find the best move in a static scenario. SWE Atlas is the equivalent of requiring an engine to play a full positional game, evaluating the long-term health of the entire software ecosystem rather than just the next move. This is the difference between an AI that functions as a sophisticated autocomplete and one that acts as a true engineering partner.

Looking ahead, this massive performance gap—from 80% on tactical tasks to 35% on architectural ones—defines the next frontier of AI development. If we want AI to eventually manage long-horizon software projects, labs must move beyond simple parameter scaling. We need models that demonstrate better error recovery and iterative planning. The models that solve this won't just be faster; they will be the first ones truly capable of building and maintaining our most complex digital infrastructure.
