Anthropic Adds 'Auto Mode' to Claude Code to Combat Approval Fatigue

After finding that developers manually approved 93% of permission prompts, Anthropic is turning to a new AI-governed safety layer.

Every developer who has used an AI coding assistant knows the rhythm of the 'approval loop': the AI asks for permission, the developer clicks 'Yes,' and the cycle repeats. It is a necessary friction, but it is also a productivity killer that leads to a dangerous phenomenon: approval fatigue. Anthropic is now attempting to break this cycle with the introduction of 'Auto Mode' for Claude Code, a new safety-focused layer that allows the AI to make its own decisions about routine tasks while keeping its guard up for the risky ones.

Finding the Middle Ground

Historically, Claude Code users faced a binary choice. You could either require manual approval for every file write and bash command, which slows complex, multi-step refactors to a crawl, or you could pass the '--dangerously-skip-permissions' flag, the metaphorical nuclear option that effectively removes all safety boundaries. Anthropic’s data revealed that this manual system was far from perfect; users were approving 93% of requests, suggesting that the human-in-the-loop process had become more of a reflexive habit than a genuine security check.

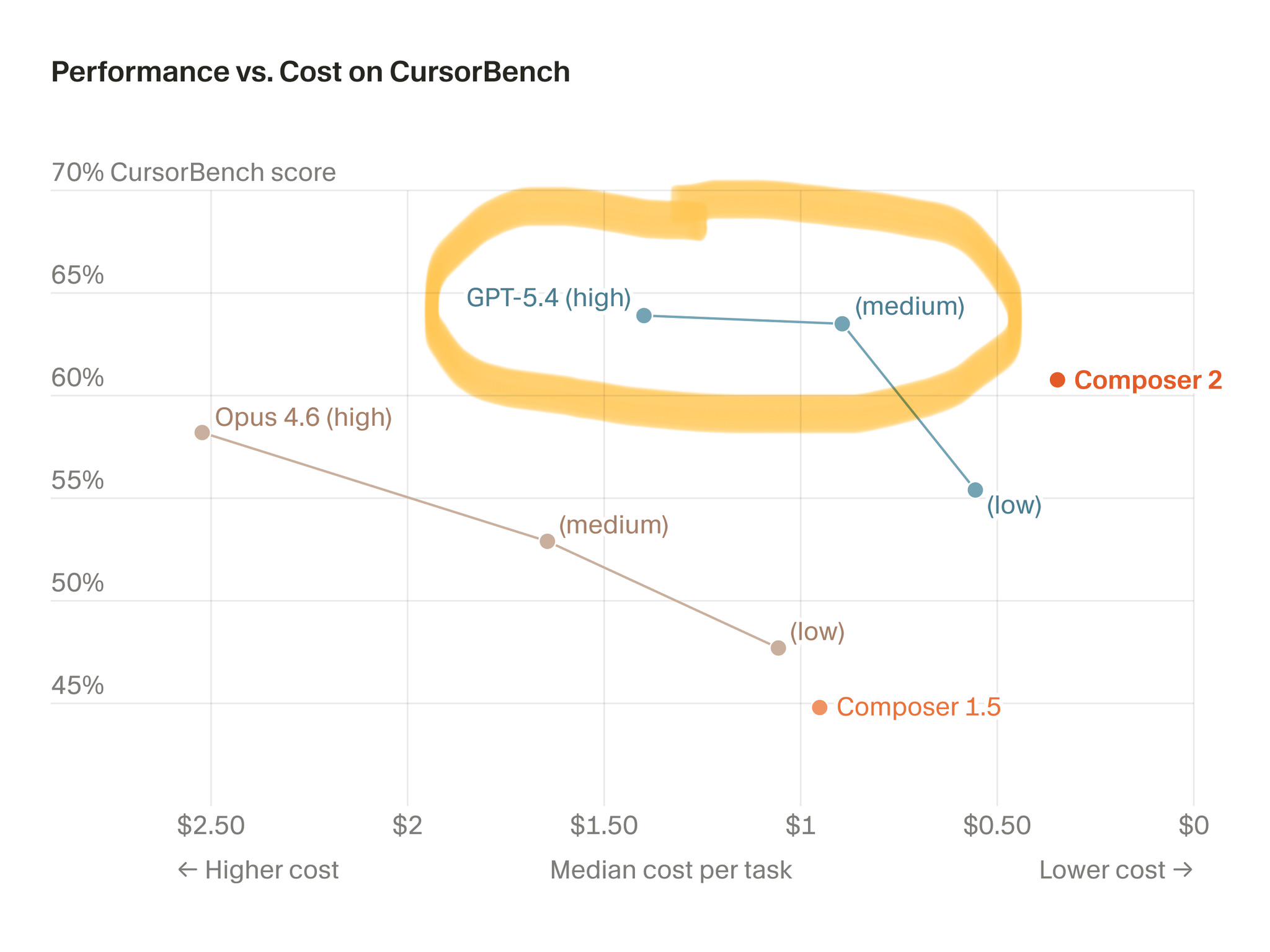

Auto Mode sits in the middle. It uses a specialized classifier, powered by Claude Sonnet 4.6, to evaluate tool calls in real time. Crucially, this classifier operates with a 'conservative bias.' If it detects ambiguity or a potentially sensitive command, it errs on the side of caution and pulls the human back into the loop. It is a system designed not to blindly trust the AI, but to intelligently triage the AI's intent.
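To make the idea of a 'conservative bias' concrete, here is a minimal Python sketch of how such a triage step might look. It is not Anthropic's implementation: the names `ToolCall`, `Decision`, `triage`, and `toy_classifier`, the tool labels, and the 0.1 threshold are all hypothetical, and a keyword heuristic stands in for the model-based classifier. The point is only the shape of the decision: a call is auto-approved when the classifier is confident it is safe, and everything else, including ambiguous scores and classifier failures, goes back to the human.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Decision(Enum):
    AUTO_APPROVE = "auto_approve"
    ASK_HUMAN = "ask_human"


@dataclass(frozen=True)
class ToolCall:
    tool: str      # hypothetical tool label, e.g. "bash" or "file_read"
    argument: str  # the command line or target path


# Hypothetical classifier interface: returns an estimated risk in [0, 1].
RiskClassifier = Callable[[ToolCall], float]


def triage(call: ToolCall, classify: RiskClassifier, safe_below: float = 0.1) -> Decision:
    """Auto-approve only when the classifier is confident the call is safe."""
    try:
        risk = classify(call)
    except Exception:
        # A failed classifier is treated as ambiguity, so the human decides.
        return Decision.ASK_HUMAN
    if not (0.0 <= risk <= 1.0):
        # Out-of-range or NaN scores are not trusted.
        return Decision.ASK_HUMAN
    # Conservative bias: the bar for skipping the human is deliberately high.
    return Decision.AUTO_APPROVE if risk < safe_below else Decision.ASK_HUMAN


def toy_classifier(call: ToolCall) -> float:
    """Illustrative stand-in for a model-based classifier (keyword heuristic)."""
    risky_markers = ("rm -rf", "sudo", "curl", ".env")
    if any(marker in call.argument for marker in risky_markers):
        return 0.9
    if call.tool == "file_read":
        return 0.01
    return 0.5  # unfamiliar calls are scored as ambiguous


if __name__ == "__main__":
    for call in (
        ToolCall("file_read", "README.md"),
        ToolCall("bash", "make test"),
        ToolCall("bash", "rm -rf build"),
    ):
        print(call.tool, repr(call.argument), "->", triage(call, toy_classifier).value)
```

In this sketch the default outcome is escalation: a call has to earn auto-approval, so an unfamiliar command like `make test` still prompts the developer even though nothing marks it as dangerous.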

The Path Toward Agentic Autonomy

The true significance of this release isn't just about saving time; it's a fundamental shift in how we build and trust autonomous agents. By replacing the constant prompt-approval cycle with an intelligent, AI-governed gatekeeper, Anthropic is moving toward a future where developers can delegate deep, multi-step technical tasks to software without having to hold its hand every step of the way. This is the difference between a tool and a colleague.

Of course, relying on an 'opaque' classifier to make security decisions carries its own set of risks. As these systems become more capable, the challenge will be providing transparency so that enterprise teams can verify that these 'autonomous agents' are behaving as expected. For now, the takeaway is clear: the era of the 'babysitting' interface is ending. We are entering an era of managed risk, where the AI is expected to show its work, and the human is expected to oversee the outcome rather than every single keystroke.

[Figure: Evolution of AI Coding Agents]
